To understand how the decode() method works in Python, start with its syntax.

Syntax of the decode() Method

The syntax is as follows:

encodeStr.decode(encodeFormat, errorMode)

In this syntax:

- encodeStr is a bytes object that was produced by encoding a string and must be decoded back into its original str form. In Python 3, decode() is defined on bytes, not on str.

- encodeFormat is the encoding format that was used to encode the string originally, such as "utf-8" or "utf16". It is optional and defaults to "utf-8".

- errorMode is the error-handling mode to use when a byte sequence cannot be decoded, such as "strict", "ignore", or "replace". It is optional and defaults to "strict".
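To see how errorMode changes the result, here is a minimal sketch that decodes a hypothetical byte string which is valid Latin-1 but not valid UTF-8. The mode names "strict", "ignore", and "replace" are standard Python error handlers; the variable names and sample bytes are illustrative assumptions.

```python
# Hypothetical bytes: "caf" plus 0xE9, which is "é" in Latin-1 but an
# incomplete multi-byte sequence in UTF-8.
encodeStr = b"caf\xe9"

# "ignore" silently drops the bytes that cannot be decoded.
ignored = encodeStr.decode("utf-8", "ignore")

# "replace" substitutes U+FFFD, the Unicode replacement character.
replaced = encodeStr.decode("utf-8", "replace")

# "strict" (the default) raises UnicodeDecodeError instead.
try:
    encodeStr.decode(encoding="utf-8", errors="strict")
    strict_failed = False
except UnicodeDecodeError:
    strict_failed = True

# Decoding with the format that actually produced the bytes recovers the text.
recovered = encodeStr.decode("latin-1")

# ascii() keeps the printed output terminal-safe.
print(ascii(ignored), ascii(replaced), strict_failed, ascii(recovered))
# 'caf' 'caf\ufffd' True 'caf\xe9'
```

The arguments can be passed positionally or by keyword (encoding= and errors=), as the strict call shows.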

Now that you are familiar with the syntax of the decode() method, let's look at some examples.

Example 1: Decoding a Simply Encoded String

In this example, you will decode a string that was encoded by the encode() method without specifying an encoding format, in which case encode() uses UTF-8. To do this, first encode a string stored in stringVar using the following code snippet:

encodeStr = stringVar.encode()

Let's print the encoded string using the following line:

print("The Encoded String is as: ", encodeStr)

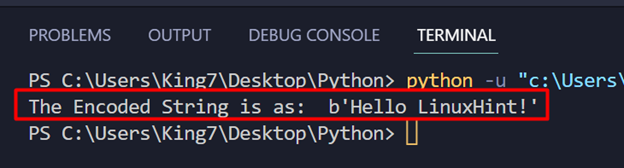

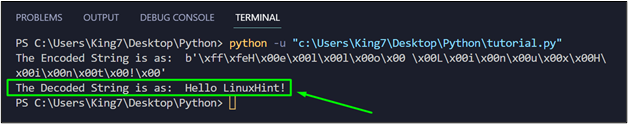

Running the program at this point prints the encoded value as a bytes literal, marked by the b prefix.

After that, apply the decode() method and print the result to the terminal using print():

stringDec = encodeStr.decode()

print("The Decoded String is as: ", stringDec)

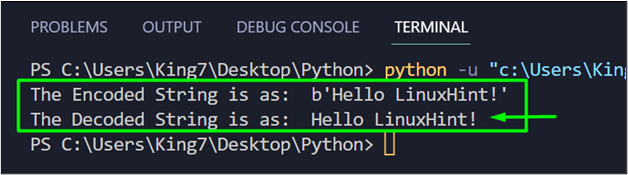

When you execute the code now, it prints the original, non-encoded string on the terminal.

You have successfully used the decode() method to recover the original non-encoded string in Python.
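Put together, Example 1 can be sketched as a single runnable script. The value assigned to stringVar is an assumption, since the original string appears only in the screenshots.

```python
# Example value for stringVar (assumed; any str works).
stringVar = "Hello, World"

# encode() with no arguments uses UTF-8 and returns a bytes object.
encodeStr = stringVar.encode()
print("The Encoded String is as: ", encodeStr)

# decode() with no arguments also assumes UTF-8, so it reverses encode().
stringDec = encodeStr.decode()
print("The Decoded String is as: ", stringDec)
```

Because both calls default to UTF-8, the round trip returns a string equal to stringVar.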

Example 2: Decoding a String With a Specific Encoding Format

To demonstrate how the decode() method works on a string that has been encoded with a specific encoding format, take the following lines of code:

encodeStr = stringVar.encode(encoding="utf16")

print("The Encoded String is as: ", encodeStr)

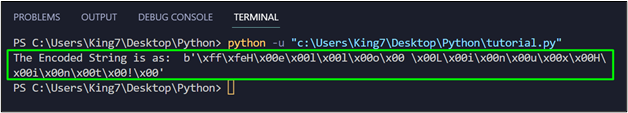

When this snippet is executed, it prints a bytes literal that starts with the UTF-16 byte-order mark (b'\xff\xfe' on little-endian machines).

If you try to apply the decode() method without specifying the encoding format:

stringDec = encodeStr.decode()

print("The Decoded String is as: ", stringDec)

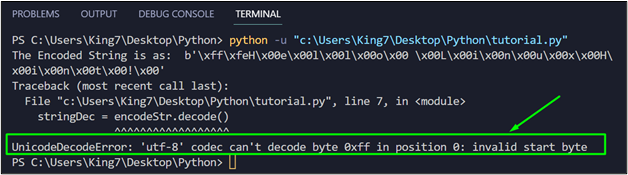

It raises a UnicodeDecodeError, because decode() falls back to UTF-8 and the UTF-16 byte-order mark is not valid UTF-8.

Therefore, the correct code for decoding this string is:

stringDec = encodeStr.decode("utf16")

print("The Decoded String is as: ", stringDec)

This time, when the complete code snippet is executed, it prints the original string on the terminal.

You have successfully decoded a string that had been encoded with a specific encoding format.
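The whole of Example 2, including the failing call, can be sketched as one script. The value of stringVar is an assumption, and the try/except block is added only so the script keeps running after the expected UnicodeDecodeError.

```python
# Example value for stringVar (assumed).
stringVar = "Hello, World"

# Encode with UTF-16; Python prepends a byte-order mark (BOM).
encodeStr = stringVar.encode(encoding="utf16")
print("The Encoded String is as: ", encodeStr)

# Decoding without a format falls back to UTF-8, which cannot read the BOM.
utf8_failed = False
try:
    encodeStr.decode()
except UnicodeDecodeError as err:
    utf8_failed = True
    print("Decoding as UTF-8 failed:", err)

# Passing the same format that was used for encoding restores the string.
stringDec = encodeStr.decode("utf16")
print("The Decoded String is as: ", stringDec)
```

The key point is that encodeFormat must match the format used by encode(); decode() cannot detect it on its own.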

Conclusion

The decode() method in Python converts a bytes object, produced by encoding a string with a particular format, back into a string. It takes two optional arguments: the first is the encoding format, and the second is the error-handling mode. If no arguments are supplied, decode() uses "utf-8" as the encoding format and "strict" as the error-handling mode.
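A quick way to confirm these defaults is to compare a bare decode() call with an explicit one. The sample text below is an assumption chosen to include a non-ASCII character.

```python
# Sample UTF-8 bytes for "naïve" (assumed for illustration).
data = "na\u00efve".encode("utf-8")

# With no arguments, decode() behaves like decode("utf-8", "strict").
implicit = data.decode()
explicit = data.decode(encoding="utf-8", errors="strict")
print(implicit == explicit)  # True
```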